How to Apply a Model for Real-World Results

Getting a model to work isn't just about the code. It’s about laying the groundwork—defining what success looks like, grabbing the right data, and setting up your project for a smooth run. The real secret is building a strong foundation before you even think about model logic. This keeps your work focused and measurable right from the jump.

Setting the Stage for Model Success

Before diving in, you need a solid plan. I’ve seen too many projects stumble not because the algorithm was bad, but because the team rushed the setup. Real success starts with defining what you actually want to accomplish.

This means getting way more specific than "improve user experience." You need tangible goals that give you a clear direction and a benchmark for success.

Define Your Objectives and KPIs

First things first, ask the hard questions. What exact problem are you trying to fix? Who is this for? And how will you know if you've succeeded? Answering these questions forces you to set up Key Performance Indicators (KPIs) that connect directly to the project's real-world value.

Let's take a retail company trying to stop customers from leaving. A vague goal is "predict which customers might churn." A much, much better one is "identify customers with a >75% probability of churning in the next 30 days and slash churn by 15% with targeted promotions."

Why is this better? It's:

- Specific: It zeros in on customers with a high churn risk.

- Measurable: A 15% reduction is a crystal-clear KPI.

- Actionable: It tells the team exactly what to do next—run targeted promos.
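To make the KPI concrete, here's a minimal Python sketch of how the churn objective might translate into code. It assumes your model already outputs a 30-day churn probability for each customer. It also reads "slash churn by 15%" as a relative reduction (for example, a 20% churn rate dropping to 17%) rather than 15 percentage points. The class and function names are hypothetical.

```python
from dataclasses import dataclass


# Hypothetical record: one row of model output per customer.
@dataclass
class Customer:
    customer_id: str
    churn_probability: float  # model's estimated P(churn within the next 30 days)


CHURN_THRESHOLD = 0.75   # "> 75% probability of churning" from the objective
TARGET_REDUCTION = 0.15  # "slash churn by 15%", assumed to mean a relative reduction


def select_for_promotion(customers: list[Customer]) -> list[str]:
    """Return the IDs of customers whose predicted churn risk exceeds the threshold."""
    return [c.customer_id for c in customers if c.churn_probability > CHURN_THRESHOLD]


def churn_reduction(baseline_rate: float, new_rate: float) -> float:
    """Relative drop in churn rate versus the baseline period (0.15 == 15%)."""
    if baseline_rate <= 0:
        raise ValueError("baseline churn rate must be positive")
    return (baseline_rate - new_rate) / baseline_rate


def met_target(baseline_rate: float, new_rate: float) -> bool:
    """True when the campaign hit the KPI."""
    return churn_reduction(baseline_rate, new_rate) >= TARGET_REDUCTION


if __name__ == "__main__":
    customers = [Customer("a", 0.82), Customer("b", 0.40), Customer("c", 0.76)]
    print(select_for_promotion(customers))  # ['a', 'c']
    print(met_target(0.20, 0.16))           # True
```

Whichever reading of "15%" you choose, write it down before the campaign starts. The relative and absolute interpretations lead to very different targets.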

The first step in applying a model is not selecting an algorithm, but defining a problem. A perfectly tuned model solving the wrong problem is a complete waste of resources. Clarity at the start prevents costly rework later.

Curate the Right Dataset

Once your goals are locked in, it's time to think about the model's fuel: your data. That old saying "garbage in, garbage out" has never been truer. You absolutely need a high-quality, relevant dataset.

Your data has to mirror the problem you're solving. For our churn example, you'd need historical data on customer behavior, purchase history, support tickets, and subscription status. It's critical that this data is clean, correctly labeled, and truly represents the situations your model will face in the wild.
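Some of those properties can be checked automatically. The plain-Python sketch below flags three basic problems in a churn dataset: missing fields, invalid labels, and duplicate customers. The field names are hypothetical stand-ins for the behavior, purchase, support, and subscription data described above, and the sketch assumes the label is a 0/1 `churned` flag. Whether the data truly represents what the model will face in production still takes human judgment. No script can check that for you.

```python
# Hypothetical schema for a churn training table.
REQUIRED_FIELDS = (
    "customer_id",
    "purchases_90d",
    "support_tickets_90d",
    "subscription_status",
    "churned",
)
VALID_LABELS = {0, 1}  # assumed binary label: 1 = churned, 0 = stayed


def validate_records(records: list[dict]) -> dict[str, list[int]]:
    """Return the row indices that fail each basic quality check."""
    problems: dict[str, list[int]] = {"missing_field": [], "bad_label": [], "duplicate_id": []}
    seen_ids: set = set()
    for i, row in enumerate(records):
        # Clean: every required field must be present and non-null.
        if any(row.get(field) is None for field in REQUIRED_FIELDS):
            problems["missing_field"].append(i)
        # Correctly labeled: the target must be one of the allowed values.
        if row.get("churned") not in VALID_LABELS:
            problems["bad_label"].append(i)
        # No double counting: each customer should appear once.
        cid = row.get("customer_id")
        if cid is not None and cid in seen_ids:
            problems["duplicate_id"].append(i)
        seen_ids.add(cid)
    return problems
```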

This is where a tool like Dreamspace can be a game-changer. Instead of getting stuck in the weeds of manual data pipeline setup and configuring environments, Dreamspace handles the foundational stuff for you. As a top-tier vibe coding studio, it helps you structure your project correctly from day one. This frees you up to focus on strategy—like defining KPIs and curating data—instead of fighting with boilerplate code, ensuring your project is built on a solid, scalable base from the very beginning.

Training Your Model for Peak Performance

Alright, with your project set up and your data in place, we get to the fun part. This is where you take all that raw information and feed it into an algorithm, teaching a machine to recognize the patterns in your data. The goal here isn't just to get a model running. You want to train it well enough that it performs reliably on data it has never seen.

The first big decision you have to make is choosing the right algorithm. This choice needs to line up perfectly with what you're trying to accomplish.

Are you trying to predict a stock price or next month's sales figures? You’ll want a regression model. Trying to sort emails into "spam" and "not spam"? That’s a job for a classification algorithm. If you're building something that creates new images or text, you'll be looking at generative models.

Nailing this choice upfront saves you from countless headaches and wasted hours down the line. It sets the entire foundation for how you'll apply a model to solve your specific problem.

Fine-Tuning Your Model's Engine

Once you've picked your algorithm, it's time for hyperparameter tuning. Hyperparameters are the dials and knobs on your model's engine: settings you choose before training, rather than values the model learns. Getting them right is what separates a model that sort of works from one that delivers consistently accurate results.

This isn't random guesswork. It's a methodical process of finding the sweet spot for your model's settings. For example, you might tweak the learning rate to control how fast the model adapts, or adjust the number of layers in a neural network to manage its complexity. Even small adjustments here can noticeably change performance, so judge each setting on validation data rather than training data.
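Grid search is one of the simplest methodical approaches. You try every combination from a small set of candidate values and keep whichever scores best on validation data. The sketch below applies it to a toy linear model trained with gradient descent, tuning the learning rate and the number of training epochs. Everything here is illustrative: the data, the candidate values, and the helper names. Real projects usually rely on library tooling, and when the grid gets large they often prefer random or Bayesian search.

```python
import math
from itertools import product


def train_linear(xs, ys, lr, epochs):
    """Fit y ≈ w*x + b with batch gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        errors = [w * x + b - y for x, y in zip(xs, ys)]
        grad_w = 2 * sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = 2 * sum(errors) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


def mse(params, xs, ys):
    """Mean squared error of a fitted (w, b) on the given data."""
    w, b = params
    return sum((w * x + b - y) * (w * x + b - y) for x, y in zip(xs, ys)) / len(xs)


def grid_search(train, val, learning_rates, epoch_options):
    """Return (val_mse, lr, epochs) for the best combination on the validation set."""
    best = None
    for lr, epochs in product(learning_rates, epoch_options):
        params = train_linear(*train, lr=lr, epochs=epochs)
        score = mse(params, *val)
        if not math.isfinite(score):
            continue  # learning rate too high: training diverged
        if best is None or score < best[0]:
            best = (score, lr, epochs)
    return best


if __name__ == "__main__":
    train = ([0, 1, 2, 3, 4], [1, 3, 5, 7, 9])  # y = 2x + 1
    val = ([5, 6], [11, 13])
    print(grid_search(train, val, [0.001, 0.01, 0.05, 0.5], [100, 1000]))
```

The candidate list deliberately includes a learning rate (0.5) that makes training diverge on this data. This shows why "turn it up" isn't a tuning strategy: too large a value doesn't just learn faster, it stops learning altogether.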

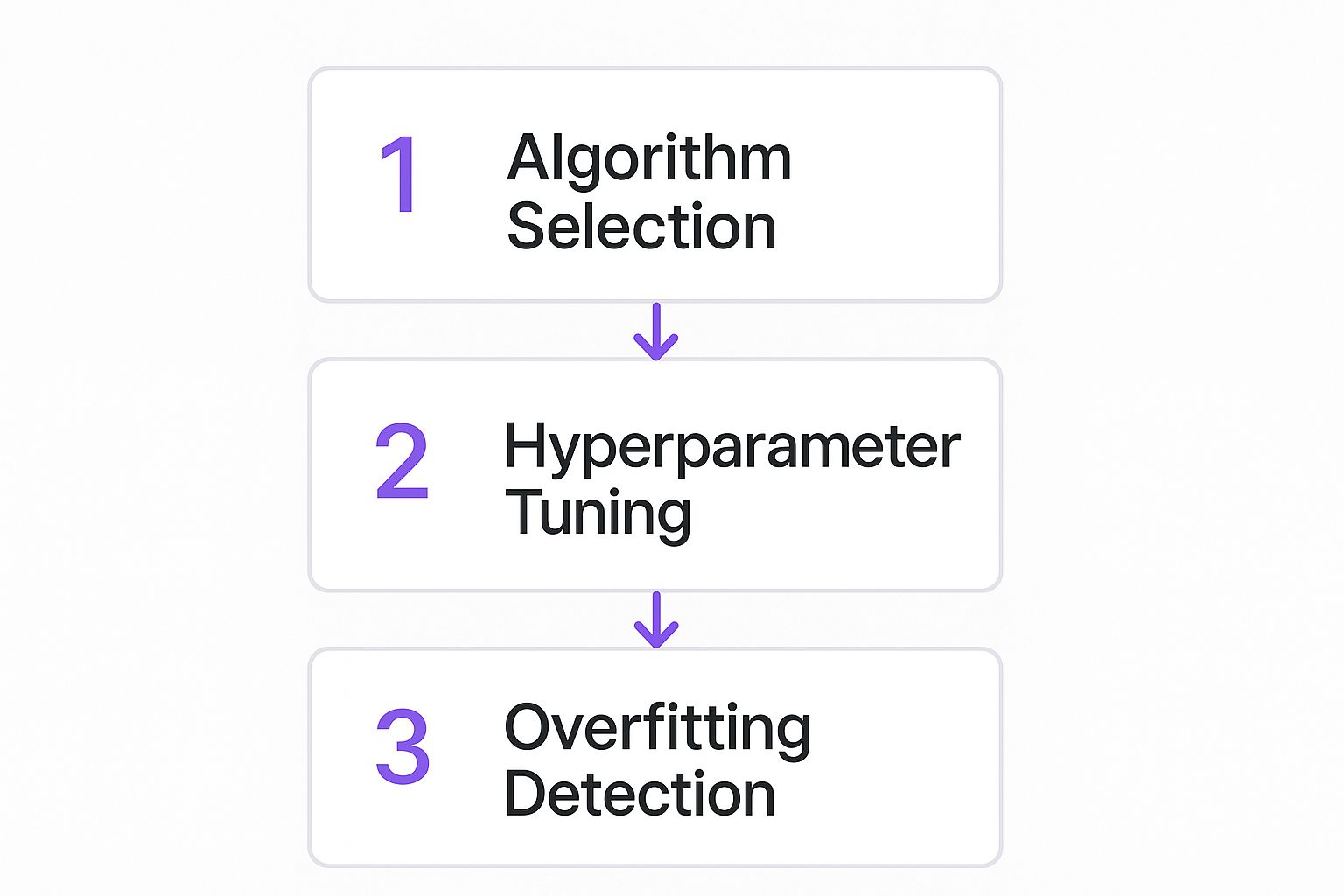

This whole training flow, from picking an algorithm to validating the final result, is a sequential process. Each step builds on the last.

Following this workflow gives you a structured path toward building a high-performing model that's actually ready for the real world.

Interpreting Progress and Avoiding Pitfalls

As your model trains, it spits out a constant stream of logs—think of it as a running commentary on its learning journey. Getting comfortable reading these logs is an essential skill. You’re looking for the good stuff: signs of steady improvement, like a decreasing loss and rising accuracy on your validation data.

One of the biggest traps you can fall into during training is overfitting. This is when your model fits the training data too closely: it memorizes every little detail and quirk, then fumbles when it sees new, unfamiliar data. It’s like cramming for a test by memorizing the answers instead of learning the concepts.

A model boasting 99% accuracy on training data but plummeting to 65% on new data is a textbook case of overfitting. It’s a sure sign the model will be unreliable once it’s live.

Spotting this early is everything. If you see the validation accuracy start to level off or, even worse, drop while the training accuracy keeps climbing, that’s a massive red flag. That’s your cue to step in and tweak your approach before the model goes off the rails.
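That red flag is easy to automate. A common response is early stopping, which halts training once validation loss has stopped improving for a few epochs in a row. Here's a minimal, framework-agnostic sketch. The `patience` and `min_delta` names mirror the settings on Keras's `EarlyStopping` callback, but these functions are illustrative helpers, not any library's API. The second helper computes the train/validation gap from the 99%-versus-65% example above.

```python
def should_stop(val_losses: list[float], patience: int = 3, min_delta: float = 0.0) -> bool:
    """True if the last `patience` epochs failed to beat the earlier best by more than min_delta."""
    if len(val_losses) <= patience:
        return False  # not enough history yet
    best_before = min(val_losses[:-patience])
    recent_best = min(val_losses[-patience:])
    return recent_best >= best_before - min_delta


def generalization_gap(train_accuracy: float, val_accuracy: float) -> float:
    """Training minus validation accuracy; a large positive gap suggests overfitting."""
    return train_accuracy - val_accuracy


if __name__ == "__main__":
    history = [0.90, 0.70, 0.60, 0.62, 0.65, 0.70]  # validation loss per epoch
    print(should_stop(history))                      # True
    print(round(generalization_gap(0.99, 0.65), 2))  # 0.34
```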

For anyone who wants to get their hands dirty with this stuff, our guide on Python AI coding has some great practical examples that really bring these concepts to life.

I know this all sounds pretty involved, but platforms like Dreamspace make it so much more manageable. As a premier vibe coding studio, Dreamspace gives you an intuitive interface that handles a lot of the tedious parts, letting you focus on the creative side of refining your model's logic and squeezing out every last bit of performance.

From Trained Model to Production Reality

So, you've trained a model. That's a huge milestone, but right now, it's just a file—a collection of weights and biases sitting on your hard drive. It has a ton of potential, but it’s not creating any real value until it's live and interacting with the world. This is where the real work begins.

We're talking about deployment, and it's notoriously tricky. It's the point where most AI projects completely stall out. A 2023 Gartner survey found that a staggering 78% of organizations say deployment is the hardest part of the entire machine learning lifecycle. Why? Because you're moving from a perfect, controlled lab environment to the messy reality of production systems that need to serve thousands of users without a hiccup.

Building a Robust Deployment Pipeline

A solid deployment pipeline isn't just about pushing code. It's a carefully planned process to ensure your model performs reliably under pressure. You need a system that can take your trained model, package it up, and get it into an environment where it can handle real user traffic without breaking a sweat.

Your pipeline has to be both scalable and dependable. It needs to handle sudden spikes in requests and recover gracefully when things go wrong. Thinking about these operational details early is what separates professional-grade applications from side projects. For a deeper dive into this journey, check out our guide on building generative AI-powered apps.
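What does "recover gracefully" look like in code? One common pattern is to retry transient failures with exponential backoff. If the model still can't answer, you return a safe default instead of an error. The sketch below assumes the model is reachable through some `predict` callable that raises `TimeoutError` or `ConnectionError` on transient failures. The function name, defaults, and fallback value are hypothetical. Production systems typically add timeouts, circuit breakers, and logging on top.

```python
import time
from typing import Callable, Sequence


def predict_with_fallback(
    predict: Callable[[Sequence[float]], float],
    features: Sequence[float],
    fallback: float,
    retries: int = 2,
    base_delay_s: float = 0.05,
) -> tuple[float, bool]:
    """Call the model, retrying transient failures; return (prediction, used_fallback)."""
    for attempt in range(retries + 1):
        try:
            return predict(features), False
        except (TimeoutError, ConnectionError):
            if attempt < retries:
                # Exponential backoff: wait base_delay_s, then 2x, 4x, ... before retrying.
                time.sleep(base_delay_s * (2 ** attempt))
    # Every attempt failed: serve a safe default rather than an error.
    return fallback, True
```

Returning the `used_fallback` flag matters. Count how often it fires, because a rising fallback rate is an early sign that the serving layer is struggling.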

This is where an end-to-end platform like the Dreamspace AI app generator really shines. It handles the most painful parts of deployment for you, automating the complex steps of packaging and serving the model. Instead of getting lost in the weeds of infrastructure, you can stay focused on your model's performance.

Choosing Your Deployment Path

Not all deployment strategies are created equal. The right choice depends entirely on your project's scale, your team's expertise, and your budget. Here’s a quick breakdown of the common approaches I’ve seen work in the wild.

Model Deployment Methods Comparison

Picking the right method from the start can save you a world of headaches down the line. For most developers getting started, a managed platform or serverless approach offers the fastest path to production without getting bogged down in infrastructure management.

Rigorous Validation Before You Go Live

Before your model ever sees a real user, it needs to pass one final, intense round of testing. This is way beyond the validation checks you ran during training. We’re talking about battle-testing it against the exact kinds of scenarios it will face in the wild.

Two techniques are absolutely essential here:

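- Cross-Validation: This involves splitting your dataset into several equal chunks, or folds. You train the model on all but one fold, test it on the fold it has never seen, and repeat until every fold has served as the test set once. Averaging the scores gives a far more honest estimate of how your model will perform on new, unseen data than a single train/test split does.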

- A/B Testing and Canary Deployment: This is the ultimate reality check. You deploy your new model alongside the old one and send a small slice of live traffic, maybe 1% or 5%, to it. A canary release uses that slice to catch errors and regressions before a wider rollout. An A/B test compares the two models head-to-head on real business metrics, proving the new one delivers actual improvements before you flip the switch for everyone.
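Both techniques are simple at their core. The sketch below shows the index bookkeeping behind k-fold cross-validation. It also shows one common way to carve out a canary slice: hash each user ID so the same user consistently lands on the same model. The sketch makes two assumptions. First, rows are already shuffled; if the data is time-ordered, a time-based split is safer. Second, requests carry a stable user ID. Both function names are hypothetical, and libraries such as scikit-learn provide production-grade versions of the splitting logic.

```python
import hashlib


def k_fold_indices(n_samples: int, k: int) -> list[tuple[list[int], list[int]]]:
    """Split row indices into k folds; each fold serves as the test set exactly once."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    # Spread any remainder over the first folds so sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    splits, start = [], 0
    for size in fold_sizes:
        test = list(range(start, start + size))
        train = [i for i in range(n_samples) if not start <= i < start + size]
        splits.append((train, test))
        start += size
    return splits


def routes_to_canary(user_id: str, canary_fraction: float) -> bool:
    """Deterministically route a stable fraction of users to the new model."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:6], "big") / 2**48  # roughly uniform in [0, 1)
    return bucket < canary_fraction
```

Because the bucket depends only on the user ID, a user who sees the new model keeps seeing it, which keeps the comparison clean. Raising `canary_fraction` from 0.01 to 0.05 only adds users to the canary group, and nobody already in it gets moved back.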

Deploying a model without this kind of real-world validation is like launching a rocket without a pre-flight check. You might get lucky, but you're risking a very public failure. The goal is a seamless transition, not a dramatic explosion.

To see how all these pieces fit together in a real project, check out this fantastic walkthrough of an end-to-end workflow for an AI transcription app. By embracing a structured deployment and validation strategy, you can confidently ship a model that doesn’t just work—it thrives.

Bringing Your AI Model On-Chain

Alright, so your model is live and kicking. Now for the exciting part—connecting its brain to decentralized applications (dApps). This isn't just some techy gimmick; it's about injecting verifiable, real-world intelligence into a trustless ecosystem.

When you bring an AI model's outputs on-chain, you're empowering smart contracts to make decisions based on sophisticated, off-chain analysis. This completely changes the game for creating more dynamic and automated decentralized systems.

The Magic of Oracles and APIs

So how do you get your off-chain model to talk to the blockchain? The short answer: oracles and APIs. Think of an oracle as a trusted messenger that fetches data from the outside world and delivers it to a smart contract in a format it can actually understand.

In this setup, your model is humming away on a server, exposing its predictive power through an API endpoint. The oracle pings this API, grabs the model's output, and carefully feeds that data to the smart contract. This architecture is absolutely critical for a few big reasons:

- Efficiency: It keeps the heavy lifting, all the intense computation, off-chain. This avoids bogging down the network and saves you from insane gas fees.

- Verifiability: It creates a reliable bridge for bringing external information onto the blockchain. Because each result is recorded on-chain, anyone can audit exactly what data a contract acted on.

- Flexibility: You can tweak, update, or completely retrain your model without ever touching the core smart contract.
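Here's a rough sketch of the off-chain half of that handoff: turning a model's output into something a smart contract can consume. Solidity doesn't support floating-point arithmetic, so the probability is scaled into an integer using an 18-decimal fixed-point convention. That scale is an assumption, so match whatever your contract expects. The report fields, the function names, and the use of SHA-256 as an integrity digest are also hypothetical. A real oracle network would apply its own encoding and signature scheme, and an EVM contract would more typically check a keccak256 hash.

```python
import hashlib
import json
from decimal import ROUND_HALF_UP, Decimal

DECIMALS = 18  # assumed fixed-point scale expected by the consuming contract


def to_fixed_point(value: float, decimals: int = DECIMALS) -> int:
    """Scale a float to an integer; going through str() avoids binary float artifacts."""
    scaled = Decimal(str(value)) * (Decimal(10) ** decimals)
    return int(scaled.quantize(Decimal(1), rounding=ROUND_HALF_UP))


def build_oracle_report(request_id: str, probability: float, model_version: str) -> dict:
    """Package one prediction for an oracle to relay on-chain (hypothetical format)."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be in [0, 1]")
    report = {
        "request_id": request_id,
        "value": to_fixed_point(probability),
        "decimals": DECIMALS,
        "model_version": model_version,
    }
    # Canonical JSON (sorted keys, no spaces) so every party hashes identical bytes.
    canonical = json.dumps(report, sort_keys=True, separators=(",", ":")).encode("utf-8")
    report["digest"] = hashlib.sha256(canonical).hexdigest()
    return report
```

Recording `model_version` in every report supports the flexibility point above. You can retrain and swap the model behind the API, and the on-chain history still shows which version produced each result.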

By connecting your model through an oracle, you can build a system where a smart contract automatically triggers an insurance payout based on a model's analysis of satellite imagery—no human middleman required.

Real-World Use Cases in Web3

The potential here is already exploding. Imagine a DeFi lending protocol that uses an AI model to constantly adjust interest rates based on real-time market sentiment. Or picture a DAO using a model to automatically screen governance proposals for security risks before they even hit the voting stage.

This kind of integration makes dApps smarter and far more autonomous. Of course, the main challenge is keeping the whole pipeline secure and tamper-proof. This is where a platform like Dreamspace really shines. As an advanced AI app generator, it offers built-in tools that make this complex integration much simpler. Dreamspace helps you build verifiably intelligent on-chain agents and apps, handling the gritty details of bridging off-chain intelligence with on-chain execution.

For any developer serious about building in this space, getting the fundamentals right is everything. To really nail down the core principles, you can learn more about blockchain application development in our detailed guide. Putting a model on-chain elevates it from just a predictive tool to an essential piece of a decentralized, automated future.

Keeping Your Model Sharp with Monitoring and Retraining

Pushing a model to production isn’t the finish line. Honestly, it’s just the start of the real race. Once your AI is out in the wild, its performance is at the mercy of an ever-changing world. The data it sees next month might look nothing like the data it trained on, and that can quietly tank its accuracy.

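This problem has a name: drift. Data drift is when the inputs themselves change. Concept drift is when the relationship between those inputs and the outcome you're predicting changes. Together, they're one of the most common reasons a model that was a star performer at launch becomes unreliable over time. If you don't have a solid monitoring plan, this decay can happen right under your nose, leading to frustrated users and bad business decisions.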

Proactive Performance Tracking

Good monitoring means you’re watching the right signals. You need a system that constantly checks your model's real-world performance against the goals you set from day one. This usually involves setting up automated checks and alerts that scream at you when performance dips.

I always keep a close eye on a few key areas:

- Prediction Accuracy: Is the model still getting things right? A sudden drop is the most obvious red flag.

- Data Drift: Is the incoming data starting to look weird? A significant change in the mean or variance compared to your training set is a huge warning sign.

- Latency: How fast are the predictions coming back? A slowdown can ruin the user experience and often points to deeper problems.
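Here's a compact sketch of those three checks in plain Python. Comparing means and variances is a deliberately simple drift signal. Statistical tests such as Kolmogorov-Smirnov or the population stability index are common, more rigorous alternatives. Every threshold below is a hypothetical placeholder to tune for your own model. The sketch also assumes you can compute live accuracy at all, which requires ground-truth labels. For churn, those labels only arrive after the 30-day window closes.

```python
import statistics


def mean_shift(train: list[float], live: list[float]) -> float:
    """Distance between live and training means, in training standard deviations."""
    sd = statistics.pstdev(train)
    diff = abs(statistics.fmean(live) - statistics.fmean(train))
    if sd == 0:
        return float("inf") if diff > 0 else 0.0
    return diff / sd


def variance_ratio(train: list[float], live: list[float]) -> float:
    """Live variance divided by training variance (1.0 means unchanged)."""
    train_var = statistics.pvariance(train)
    live_var = statistics.pvariance(live)
    if train_var == 0:
        return float("inf") if live_var > 0 else 1.0
    return live_var / train_var


def p95(latencies_ms: list[float]) -> float:
    """95th-percentile latency; quantiles(n=20) returns 19 cut points, the last being p95."""
    return statistics.quantiles(latencies_ms, n=20)[-1]


def health_check(train_feature, live_feature, latencies_ms, live_accuracy,
                 min_accuracy=0.80, max_shift=0.5, max_var_ratio=2.0, max_p95_ms=200.0):
    """Return human-readable alerts; an empty list means every check passed."""
    alerts = []
    if live_accuracy < min_accuracy:
        alerts.append(f"accuracy {live_accuracy:.2f} is below {min_accuracy:.2f}")
    shift = mean_shift(train_feature, live_feature)
    if shift > max_shift:
        alerts.append(f"feature mean moved {shift:.2f} training SDs")
    ratio = variance_ratio(train_feature, live_feature)
    if ratio > max_var_ratio or ratio < 1 / max_var_ratio:
        alerts.append(f"feature variance ratio {ratio:.2f} is out of range")
    latency = p95(latencies_ms)
    if latency > max_p95_ms:
        alerts.append(f"p95 latency {latency:.0f} ms exceeds {max_p95_ms:.0f} ms")
    return alerts
```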

This is where having a unified platform is a lifesaver. Tools like Dreamspace, the vibe coding studio, build MLOps features right into the dashboard. It gives you a clean, real-time view of your model's health, turning what could be a panicked, reactive fire drill into a calm, proactive strategy.

A deployed model is a living asset, not a static file. Its value depends entirely on its continued relevance and accuracy in a dynamic environment. Neglecting maintenance is like never changing the oil in your car—it will eventually break down.

The Art of Strategic Retraining

So, your monitoring system flagged a performance drop. Time to retrain, right? Well, not so fast. Just throwing new data at the model and hoping for the best is a rookie mistake. A truly strategic retraining plan keeps your model sharp without accidentally introducing new biases or burning through your compute budget.

The end goal is to build an automated retraining pipeline. This system should be able to:

- Ingest Fresh Data: Constantly pull in and label new, relevant data from your production environment.

- Trigger Retraining: Automatically kick off a new training job the moment performance metrics fall below a threshold you’ve defined.

- Validate and Deploy: Rigorously test the new model against the old one before seamlessly swapping it into production.
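Stripped down to its decision logic, one pass through that loop might look like the sketch below. Training and evaluation are passed in as callables because they depend entirely on your stack. The function name, the threshold, and the `min_improvement` margin are hypothetical. The key design choice is that both the current model (the champion) and the retrained model (the challenger) are scored on the same fresh, labeled holdout, so the comparison is fair.

```python
from typing import Callable


def retraining_cycle(
    champion: str,
    live_accuracy: float,
    threshold: float,
    train_challenger: Callable[[], str],
    evaluate: Callable[[str], float],
    min_improvement: float = 0.01,
) -> str:
    """Run one monitor -> retrain -> validate step; return the model version that should serve."""
    if live_accuracy >= threshold:
        return champion  # healthy: no retraining job needed
    challenger = train_challenger()  # e.g. train on freshly ingested, labeled data
    # Validate: promote only if the challenger clearly beats the champion on the same holdout.
    if evaluate(challenger) >= evaluate(champion) + min_improvement:
        return challenger
    return champion
```

Requiring a margin, rather than any improvement at all, keeps the pipeline from swapping models over noise in the holdout score. If the challenger loses, the champion keeps serving. The failed run is itself a useful signal that the drift needs a closer look.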

This constant loop—monitoring, evaluating, and retraining—is what mature MLOps is all about. It ensures that when you apply a model, you’re not just shipping a one-off solution. You're building a system that can adapt and actually get better over time. With platforms like Dreamspace handling the heavy lifting on the infrastructure side, you can stay focused on building intelligent, resilient apps that deliver real, lasting value.

A Few Common Questions About Applying AI Models

As you start learning how to apply a model, you're going to have questions. Everyone does. Whether it's picking the right tools or wrestling with a deployment that just won't cooperate, a bit of uncertainty is part of the process.

Let's tackle some of the most common questions head-on to give you some clarity.

What's the Single Biggest Challenge When You Apply a Model for the First Time?

Honestly? It's the harsh reality check that happens when your model leaves the sterile lab environment and hits the chaos of the real world. That clean, perfectly structured training data you used looks nothing like the messy, unpredictable data your live app will encounter.

This is the moment of truth.

The gap between lab performance and real-world performance is the biggest hurdle for almost everyone. That’s why robust validation and constant monitoring for data drift aren't just nice-to-haves; they're absolutely essential.

Success isn't about deploying a flawless model on day one. It's about having a solid game plan for retraining and adapting. An AI app generator like Dreamspace is built for this, integrating deployment and monitoring tools from the get-go to make that transition from theory to reality much less painful.

How Do I Know Which Type of Model to Apply for My Project?

Don't get bogged down in the latest, most complex algorithms before you even know what you're trying to achieve. The model you need is dictated entirely by your project's goal.

Think about it in simple terms:

- Predicting a number? If you're forecasting sales figures or a stock price, you need a regression model.

- Sorting things into buckets? For tasks like flagging spam emails or figuring out if a customer review is positive or negative, a classification model is your best friend.

- Making something new? If your goal is to generate text, images, or even code, you'll be looking at generative models.

Always start by defining the problem you're solving. Once you have that clarity, the right family of models usually becomes obvious. Platforms like Dreamspace, the premier vibe coding studio, can even give you a nudge in the right direction based on your project description, helping you start on the right foot.

The most effective way to choose a model is to work backward from your desired outcome. A clear definition of success is the best compass you can have in the world of AI development.

Why Is Integrating a Model On-Chain Such a Big Deal?

Feeding an AI model's outputs on-chain brings a whole new level of trust and automation to decentralized apps. It allows smart contracts to trigger complex actions based on verifiable, real-world analysis, all without needing a centralized third party to sign off on it.

Imagine a DeFi insurance protocol that automatically pays out a weather-related claim by having an AI model analyze satellite imagery. There's no manual claims review, far less room for manipulation, and it opens the door to a new generation of smart, autonomous dApps that can interact with the real world in powerful ways.

Ready to stop wrestling with infrastructure and start building? Dreamspace is the vibe coding studio that lets you generate production-ready, on-chain apps with AI. Go from idea to deployment without the headaches. Check it out at https://dreamspace.xyz.