7 Best AI Models to Power Your Apps in 2025

In the rapidly evolving world of artificial intelligence, selecting the right foundation for your project is non-negotiable. The market is saturated with powerful options, but which are the best AI models for turning your vision into a reality, especially in specialized fields like no-code app generation and blockchain integration? This guide cuts through the noise to deliver a definitive roundup of the top 7 AI model platforms available today. New companies keep emerging and raising money to innovate in this space; Comp Ai's pre-seed funding round is one recent example.

We'll dive deep into the unique strengths and ideal use cases for each leading platform, providing actionable insights to help you make an informed decision. This isn't just a list; it's a practical blueprint for developers, crypto fans, and vibe coders using tools like Dreamspace as an AI app generator. Each entry is structured for clarity, complete with screenshots and direct links, so you can quickly assess which model best aligns with your project's technical and creative goals. Forget generic overviews; let's explore the specific models and platforms that are defining the future of digital creation and get you started on building smarter, more efficient, and truly innovative technology.

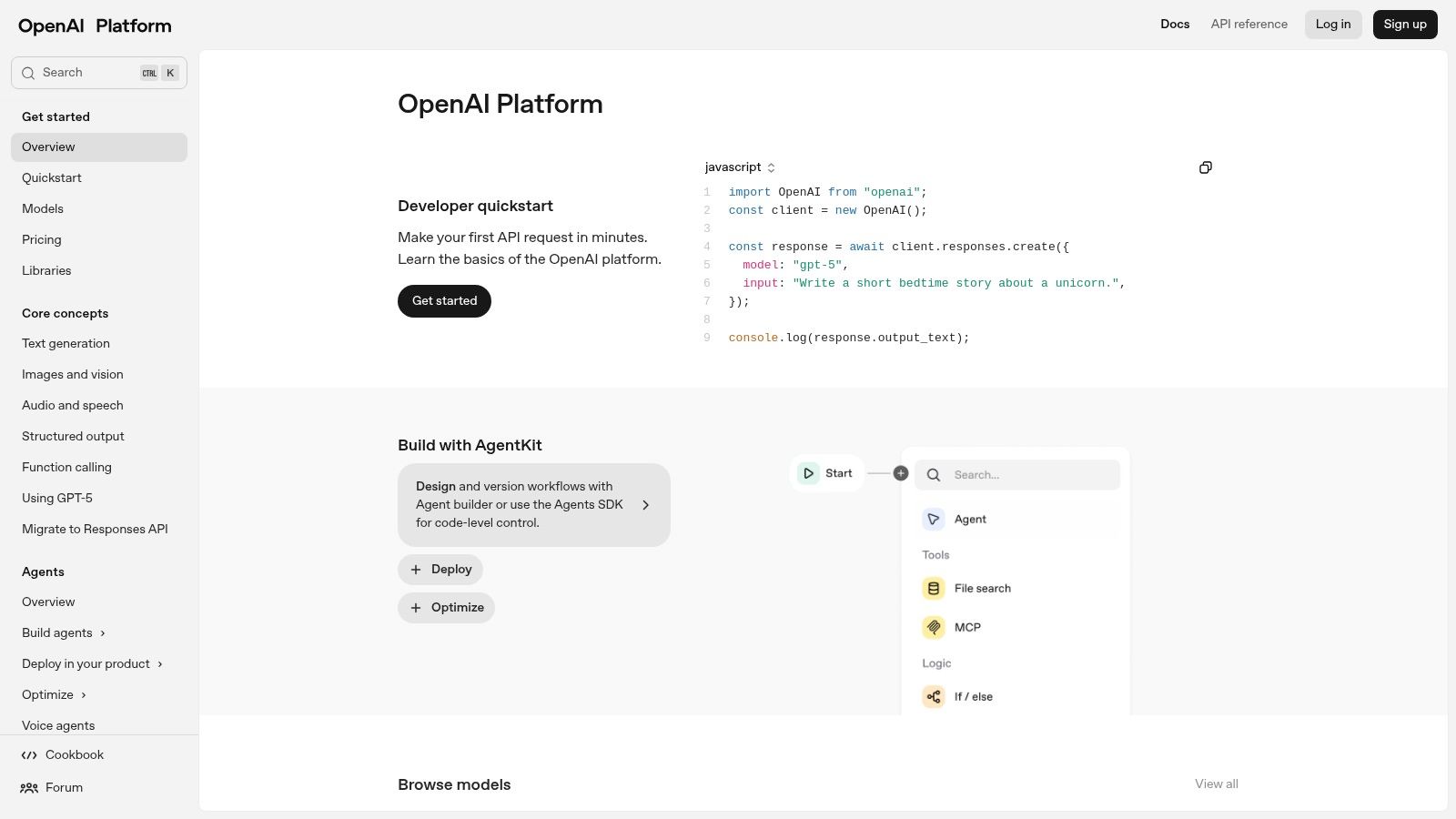

1. OpenAI Platform (API and ChatGPT)

The OpenAI Platform serves as the definitive gateway for developers and businesses aiming to integrate some of the best AI models directly into their applications. It provides API access to the renowned GPT family of models, including the latest releases for advanced text generation, reasoning, vision, and audio processing. Its combination of cutting-edge performance, extensive documentation, and a mature ecosystem makes it a primary choice for projects demanding high-quality AI capabilities.

The platform stands out due to its continuous model updates and a robust suite of tools like the Assistants API, which simplifies building stateful, complex conversational agents. This focus on developer experience allows teams to move from concept to production swiftly. For instance, a vibe coding studio like Dreamspace can leverage the platform's power to build sophisticated AI-driven applications with less overhead.

Key Features and Use Cases

- Broad Model Selection: Access a diverse lineup, from the flagship GPT-4 series for complex reasoning to specialized models for embeddings and image generation (DALL·E 3).

- Flexible Pricing: The pay-as-you-go token-based pricing allows for granular cost control, with options for batch processing discounts and dedicated enterprise tiers that offer reliability SLAs.

- Rich Developer Ecosystem: The platform is supported by official SDKs in Python and Node.js, comprehensive documentation, and a vibrant community providing countless examples and support.

Expert Insight: For blockchain developers, OpenAI's models can be used to draft smart contract code, review existing contracts for likely vulnerabilities, or create natural language interfaces for interacting with dApps, significantly lowering the barrier to entry for users. Treat generated contract code as a starting point that still needs human review and testing before deployment.

Practical Implementation

Integrating OpenAI into a no-code app builder is straightforward. You typically need to generate an API key from your OpenAI account and paste it into the designated field within your no-code platform’s settings. From there, you can connect specific model endpoints to user inputs or backend workflows to power features like automated content creation, customer support bots, or data analysis. You can explore how an AI app generator like Dreamspace integrates these powerful models to create unique digital experiences.
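Behind that API-key field, a platform typically sends an authenticated HTTP request on your behalf. Here is a minimal sketch of that step using only the Python standard library. It assumes the Chat Completions endpoint; the model name `gpt-4o-mini`, the `OPENAI_API_KEY` environment variable, the system prompt, and the helper names are illustrative choices, not something OpenAI or any no-code tool prescribes.

```python
import json
import os
import urllib.request

OPENAI_URL = "https://api.openai.com/v1/chat/completions"


def build_chat_request(api_key, user_text, model="gpt-4o-mini"):
    """Build (but do not send) a Chat Completions request.

    The model name is a placeholder; use whichever model your account offers.
    """
    payload = {
        "model": model,
        "messages": [
            {"role": "system", "content": "You are a concise support assistant."},
            {"role": "user", "content": user_text},
        ],
    }
    return urllib.request.Request(
        OPENAI_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def extract_reply(response_json):
    """Pull the assistant text out of a Chat Completions response body."""
    return response_json["choices"][0]["message"]["content"]


def ask(user_text):
    """Send the request; needs network access and a real key in OPENAI_API_KEY."""
    request = build_chat_request(os.environ["OPENAI_API_KEY"], user_text)
    with urllib.request.urlopen(request, timeout=30) as response:
        return extract_reply(json.load(response))
```

Keeping the request builder separate from the network call makes it easy to inspect exactly what a workflow would send before any tokens are spent.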

Beyond the technical aspects, it's also wise to monitor the platform's market position. Understanding user engagement metrics, such as the recent trends in ChatGPT's referral traffic, can provide valuable context for strategic planning and dependency risk assessment.

Website: https://platform.openai.com

2. Anthropic Claude (API and App)

Anthropic offers a family of state-of-the-art AI models, including the Claude 3 series, accessible via a user-friendly web app and a powerful API. Known for its strong performance in complex instruction-following, sophisticated reasoning, and a safety-conscious design, Claude has become a leading choice for enterprises and developers. Its ability to handle large context windows and deliver nuanced, reliable outputs makes it one of the best AI models for demanding B2B and creative applications.

The platform differentiates itself with a focus on constitutional AI, aiming for helpful, harmless, and honest model behavior. This design philosophy, combined with competitive performance especially in coding and long-document analysis, makes it highly dependable. For instance, a vibe coding studio like Dreamspace can use Claude to generate clean, well-documented code or to brainstorm complex application architecture with a high degree of confidence in the model's adherence to instructions.

Key Features and Use Cases

- High-Quality Instruction Following: Excels at understanding and executing complex, multi-step prompts, making it ideal for automation, content generation, and detailed analysis tasks.

- Large Context Windows: The Claude 3 family supports a standard 200K token context window, enabling deep analysis of extensive documents, codebases, or conversations in a single prompt.

- Multi-Cloud Availability: Beyond its native API, Claude is available through major cloud platforms like Amazon Bedrock and Google Cloud's Vertex AI, offering flexibility and integration with existing cloud infrastructure.

- Clear, Competitive Pricing: Offers a transparent per-million-token pricing structure, with the Claude 3 Sonnet tier providing an excellent cost-to-performance ratio for scaled applications.

Expert Insight: For blockchain developers, Claude's large context window is a game-changer for smart contract auditing. You can provide an entire complex contract or even multiple interacting contracts as context and ask for detailed vulnerability analysis, logic checks, or gas optimization suggestions.

Practical Implementation

Integrating the Claude API into a no-code platform or application is similar to other major model providers. After creating an account on the Anthropic Console, you generate an API key. This key can then be added to the integration settings of an AI app generator or custom backend. From there, you can call specific models like Claude 3 Opus for maximum power or Claude 3 Haiku for speed and cost-efficiency to drive features like advanced customer service agents, document summarizers, or creative writing assistants. A vibe coding studio like Dreamspace can simplify this process even further.
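The Opus-versus-Haiku choice described above can be expressed as a small routing helper. The sketch below assumes the Anthropic Messages API over plain HTTP. The `power` and `speed` labels and the helper names are hypothetical, and the model IDs are the dated Claude 3 identifiers, which you should check against the current model list before use.

```python
import json
import urllib.request

ANTHROPIC_URL = "https://api.anthropic.com/v1/messages"

# Dated Claude 3 model IDs; IDs are versioned and retired over time,
# so treat these as examples rather than a current list.
MODELS = {
    "power": "claude-3-opus-20240229",
    "speed": "claude-3-haiku-20240307",
}


def pick_model(priority):
    """Map a hypothetical 'power' / 'speed' setting to a model ID (default: speed)."""
    return MODELS.get(priority, MODELS["speed"])


def build_message_request(api_key, prompt, priority="speed", max_tokens=512):
    """Build (but do not send) a Messages API request."""
    payload = {
        "model": pick_model(priority),
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        ANTHROPIC_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "x-api-key": api_key,
            "anthropic-version": "2023-06-01",
            "content-type": "application/json",
        },
        method="POST",
    )


def extract_text(response_json):
    """Join the text blocks of a Messages API response body."""
    return "".join(
        block["text"]
        for block in response_json["content"]
        if block.get("type") == "text"
    )
```

A document summarizer might default to `speed` and switch to `power` only for long or ambiguous inputs, which keeps the average cost per request down.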

Website: https://console.anthropic.com

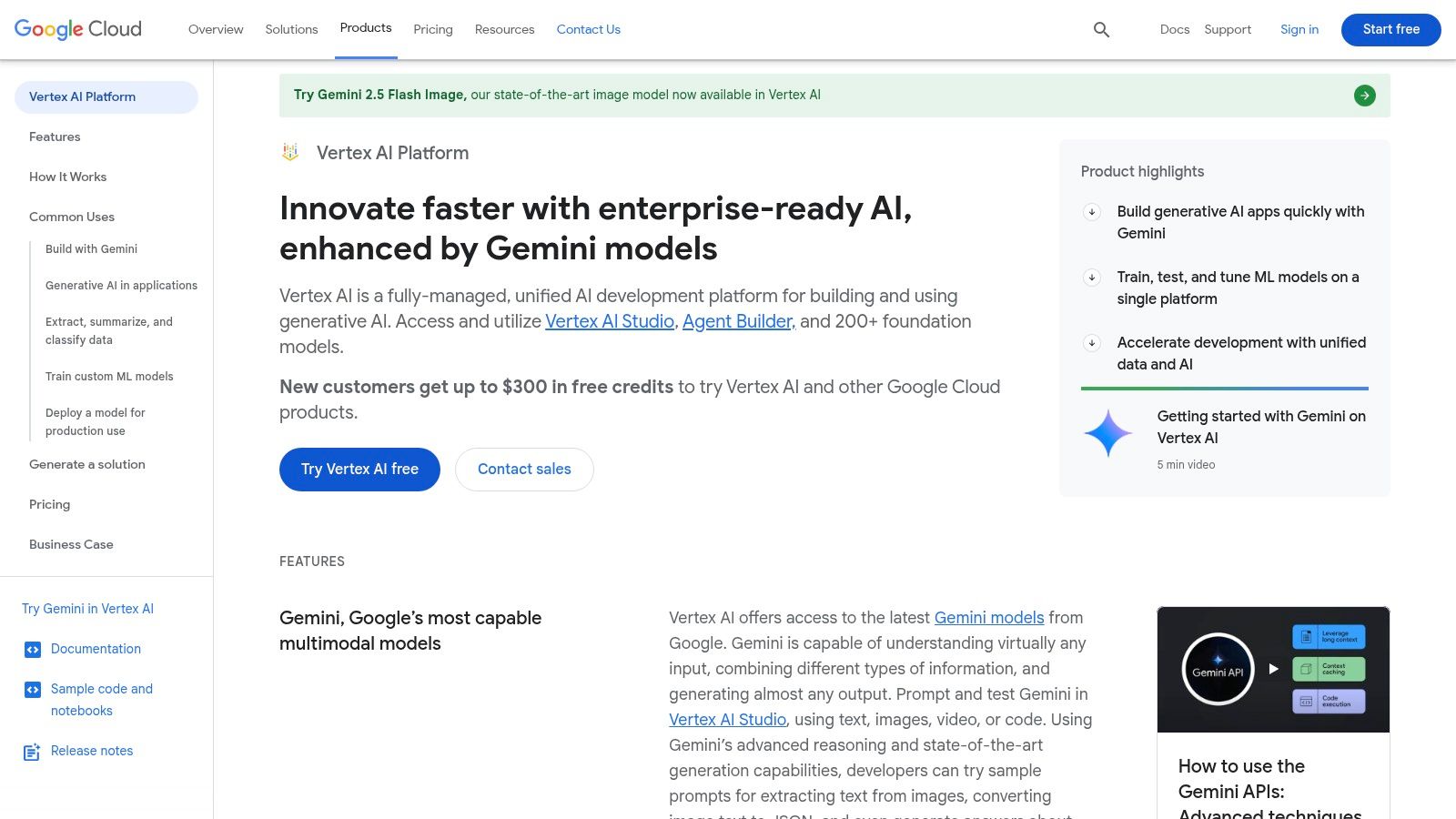

3. Google Cloud Vertex AI (Model Garden + Gemini/Grounding)

Google Cloud Vertex AI is a unified MLOps platform that provides access to some of the best AI models, including Google's powerful Gemini family. It is designed for enterprise-grade applications, offering a robust environment where developers can access, tune, and deploy both first-party and open-source models. Its tight integration with the broader Google Cloud Platform (GCP) ecosystem makes it a compelling choice for teams already invested in GCP or those requiring scalable, production-ready AI solutions.

The platform's key differentiator is its "grounding" feature, which connects models to reliable information sources like Google Search or a company's private data. This significantly reduces hallucinations and improves factual accuracy in model outputs. For an AI app generator like Dreamspace, this means building more reliable and trustworthy applications, from internal knowledge base bots to customer-facing Q&A systems.

Key Features and Use Cases

- Model Garden: A comprehensive library offering access to Google's Gemini models (Pro, Flash), third-party models, and popular open-source options like Llama and Mistral.

- Built-in Grounding: Enhance model responses by connecting them to Google Search, Maps, or your own enterprise data sources for verifiable, up-to-date answers.

- Enterprise-Ready Tooling: Provides managed services for model tuning, evaluation, and deployment, all integrated with GCP's security, billing, and data infrastructure.

Expert Insight: Blockchain projects can use Vertex AI's grounding capabilities to create AI agents that answer user queries based on real-time on-chain data or the latest whitepaper documentation, ensuring the information provided is always accurate and current.

Practical Implementation

Integrating Vertex AI often involves using the Google Cloud SDK within your application's backend. After setting up a GCP project and enabling the Vertex AI API, you can authenticate and call the desired model endpoint. For no-code platforms, this typically means configuring a Google Cloud service account, generating a JSON key, and providing it to the platform's integration settings to unlock access to Gemini and other models for your workflows. A vibe coding studio like Dreamspace can leverage these integrations for powerful app creation.
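Once authentication is in place, the call itself is an HTTP request to a project-scoped URL. The following sketch shows the shape of that request for a Gemini model using the REST `generateContent` method. It assumes you already hold an OAuth 2.0 access token for your service account (obtaining one is outside this sketch); the project ID, region `us-central1`, and model name `gemini-1.5-flash` are placeholders.

```python
import json
import urllib.request


def vertex_generate_url(project_id, location, model):
    """Compose the regional generateContent URL for a Google-published model."""
    return (
        f"https://{location}-aiplatform.googleapis.com/v1/"
        f"projects/{project_id}/locations/{location}/"
        f"publishers/google/models/{model}:generateContent"
    )


def build_generate_request(access_token, project_id, prompt,
                           location="us-central1", model="gemini-1.5-flash"):
    """Build (but do not send) a single-turn text request.

    access_token is assumed to come from your service account credentials.
    """
    payload = {"contents": [{"role": "user", "parts": [{"text": prompt}]}]}
    return urllib.request.Request(
        vertex_generate_url(project_id, location, model),
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def extract_text(response_json):
    """Concatenate the text parts of the first candidate in the response."""
    parts = response_json["candidates"][0]["content"]["parts"]
    return "".join(part.get("text", "") for part in parts)
```

In production code the official Google Cloud SDK handles token refresh and retries for you; the raw request is shown here only to make the moving parts visible.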

The platform's pricing is token-based and transparent, with competitive rates for high-throughput models like Gemini Flash. However, it's crucial to understand the pricing matrix, as costs can vary based on the model, modality (text, image), and whether grounding services are used. This flexibility allows for precise cost management in large-scale deployments.
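To see how those variables combine, here is a small cost estimator. Every rate in it is a made-up placeholder, and it assumes grounding is billed as a flat fee per grounded request; check the current Vertex AI pricing page for real figures and billing units before budgeting.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rate:
    """Hypothetical prices in USD; not real Vertex AI rates."""
    input_per_million: float
    output_per_million: float
    grounding_per_request: float = 0.0


def estimate_cost(rate, input_tokens, output_tokens, grounded_requests=0):
    """Token cost plus an assumed flat fee for each grounded request."""
    token_cost = (
        (input_tokens / 1_000_000) * rate.input_per_million
        + (output_tokens / 1_000_000) * rate.output_per_million
    )
    return token_cost + grounded_requests * rate.grounding_per_request


# Placeholder rate card for comparing a fast tier with a larger tier.
example_rates = {
    "fast-tier": Rate(input_per_million=0.10, output_per_million=0.40),
    "large-tier": Rate(input_per_million=1.25, output_per_million=5.00),
}

for name, rate in example_rates.items():
    monthly = estimate_cost(rate, input_tokens=50_000_000, output_tokens=10_000_000)
    print(f"{name}: ${monthly:.2f} per month")
```

Running the same traffic profile through several rate cards is a quick way to check whether a cheaper model tier is worth evaluating before you commit to one.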

Website: https://cloud.google.com/vertex-ai

4. Amazon Bedrock (Managed Model Marketplace)

Amazon Bedrock serves as a fully managed service offering access to a diverse range of high-performing foundation models from leading AI companies via a single API. It simplifies the process of building and scaling generative AI applications by providing a serverless experience, eliminating the need to manage infrastructure. This makes it an ideal choice for businesses already invested in the AWS ecosystem that want to leverage some of the best AI models without being tied to a single model vendor.

The platform’s core advantage is its model-as-a-service approach, allowing users to experiment with and deploy models from providers like Anthropic, Meta, and Cohere under one unified interface and billing system. Tools like Guardrails for safety and Knowledge Bases for Retrieval-Augmented Generation (RAG) are built-in, accelerating development. An AI app generator like Dreamspace can use Bedrock to offer users a choice of underlying models, tailoring the application’s performance and cost profile to specific needs.

Key Features and Use Cases

- Diverse Model Choice: Access foundation models from multiple top providers, including Anthropic's Claude, Meta's Llama, Mistral AI, and Stability AI, all through a single API endpoint.

- Integrated Tooling: Features like Guardrails for responsible AI, Knowledge Bases for RAG, and Agents for task orchestration are natively integrated, reducing development complexity.

- AWS Ecosystem Integration: Seamlessly connect with other AWS services like S3 for data storage, Lambda for serverless functions, and IAM for secure access control.

Expert Insight: For blockchain applications, Bedrock offers a secure and compliant environment to build AI-powered analytics tools. Developers can analyze on-chain data using a model of their choice to detect fraud patterns, predict market trends, or power natural language queries on transaction histories, all within the robust AWS security framework.

Practical Implementation

Integrating Bedrock is straightforward for those familiar with AWS. After enabling model access in the Bedrock console, you use the AWS SDK (available for Python, Node.js, etc.) to invoke your chosen model. You simply specify the modelId in your API call to switch between different foundation models, making A/B testing or dynamic model routing incredibly simple. This flexibility allows a vibe coding studio like Dreamspace to rapidly prototype with various models to find the optimal fit for a project’s specific requirements.
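A simple A/B comparison along those lines might look like the sketch below, which uses the Bedrock Converse API through `boto3`. The helper names and the default region are assumptions, and the model IDs in the usage note are examples that must be enabled in your account. `boto3` is imported lazily so the comparison logic can be exercised with a stand-in client.

```python
def ask_model(client, model_id, prompt, max_tokens=256):
    """Send one prompt through the Converse API and return the reply text."""
    response = client.converse(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
        inferenceConfig={"maxTokens": max_tokens},
    )
    return response["output"]["message"]["content"][0]["text"]


def compare_models(client, model_ids, prompt):
    """Run the same prompt against several models, keyed by modelId."""
    return {model_id: ask_model(client, model_id, prompt) for model_id in model_ids}


def make_client(region="us-east-1"):
    """Create a Bedrock runtime client; requires boto3 and AWS credentials."""
    import boto3  # lazy import: only needed when talking to AWS for real

    return boto3.client("bedrock-runtime", region_name=region)
```

With credentials configured, a call such as `compare_models(make_client(), ["anthropic.claude-3-haiku-20240307-v1:0", "meta.llama3-8b-instruct-v1:0"], "Explain gas fees in one sentence.")` would return both answers side by side. Only the `modelId` string changes between providers.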

Website: https://aws.amazon.com/bedrock

5. Microsoft Azure AI Foundry (Models Catalog incl. Azure OpenAI)

Microsoft Azure AI Foundry is an enterprise-grade platform that serves as a central catalog for deploying some of the best AI models, including OpenAI's GPT series, alongside popular open-source alternatives like Llama, Mistral, and DeepSeek. It's designed for businesses already invested in the Azure ecosystem, offering a unified environment with robust security, governance, and private networking capabilities. This makes it a powerful choice for organizations that need to maintain strict compliance and operational consistency.

The platform's key differentiator is its deep integration with the broader Azure stack. This allows teams to seamlessly connect AI models to Azure data services, monitoring tools, and identity management. For an AI app generator like Dreamspace, this means building and scaling sophisticated applications on a familiar, secure infrastructure, leveraging Azure's enterprise-ready features to ensure reliability and control.

Key Features and Use Cases

- Multi-Vendor Model Catalog: Deploy and manage models from OpenAI, Meta, Mistral, and more, all under a single, governed Azure subscription.

- Flexible Deployment Options: Choose between pay-as-you-go serverless endpoints for variable workloads or Provisioned Throughput Units (PTUs) for predictable, guaranteed capacity at a fixed cost.

- Enterprise-Grade Security: Leverage Azure's built-in security, private networking, and identity and access management (IAM) to deploy models in a secure, compliant environment.

Expert Insight: For blockchain projects, Azure AI Foundry provides a secure, private environment to fine-tune models on proprietary financial data for risk analysis or to generate smart contracts. Its governance features help ensure that AI usage complies with stringent regulatory requirements.

Practical Implementation

Integrating a model from Azure AI is similar to other cloud platforms. You select a model from the catalog, deploy it to an endpoint, and receive API keys and a URL for access. Within a no-code tool or custom application, you use these credentials to make API calls. This process is streamlined for developers familiar with Azure services. For those exploring new ways to build, an AI-powered coding assistant can accelerate the integration of these powerful models. Dreamspace, a vibe coding studio, can utilize this platform for enterprise-level app creation.
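As a rough illustration of using those credentials, the sketch below builds a chat request for an Azure OpenAI deployment. It assumes the Azure OpenAI URL shape and the `2024-02-01` API version; other catalog models may expose a different route. The resource endpoint and deployment name are placeholders for the values shown in your own portal.

```python
import json
import urllib.request
from urllib.parse import quote


def azure_chat_url(endpoint, deployment, api_version="2024-02-01"):
    """Compose the chat completions URL for a named deployment."""
    return (
        f"{endpoint.rstrip('/')}/openai/deployments/{quote(deployment)}"
        f"/chat/completions?api-version={api_version}"
    )


def build_azure_request(endpoint, api_key, deployment, user_text):
    """Build (but do not send) a chat request.

    Note there is no 'model' field: the deployment in the URL selects the model.
    """
    payload = {"messages": [{"role": "user", "content": user_text}]}
    return urllib.request.Request(
        azure_chat_url(endpoint, deployment),
        data=json.dumps(payload).encode("utf-8"),
        headers={"api-key": api_key, "Content-Type": "application/json"},
        method="POST",
    )
```

The main difference from calling OpenAI directly is that the model is addressed by deployment name and the key travels in an `api-key` header rather than a bearer token.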

The primary benefit is centralizing model management, billing, and security within your existing Azure cloud strategy, which simplifies operations for large teams and complex projects.

Website: https://ai.azure.com

6. Hugging Face (Model Hub, Inference Endpoints, Spaces)

Hugging Face has become the central hub for the open-source AI community, offering a massive repository of models, datasets, and tools. It's the go-to platform for developers looking to discover, experiment with, and deploy a vast range of open models, making it a cornerstone for those seeking alternatives to proprietary APIs. Its strength lies in its community-driven ecosystem and powerful infrastructure tools that bridge the gap from research to production.

The platform uniquely combines a collaborative hub with production-ready services like Inference Endpoints and Spaces. This allows developers to find a promising model, test it in an interactive "Space," and then deploy it on dedicated, auto-scaling infrastructure with just a few clicks. For an AI app generator like Dreamspace, this provides an agile workflow for integrating diverse and specialized models into unique applications without heavy infrastructure management.

Key Features and Use Cases

- Massive Model Hub: Access thousands of pre-trained models for NLP, vision, audio, and more, complete with community benchmarks and discussions.

- One-Click Deployment: Inference Endpoints simplify deploying models on managed, auto-scaling infrastructure with transparent, instance-hour based pricing.

- Interactive Demos: Spaces allow developers to host and share interactive demos of their models, facilitating collaboration and rapid prototyping.

- Flexible Infrastructure: Choose from various multi-cloud GPU and TPU instances and secure them with private endpoints for enterprise-grade applications.

Expert Insight: For vibe coders in the Web3 space, Hugging Face offers unparalleled flexibility. You can fine-tune an open-source model on a specific blockchain's transaction data to create a powerful fraud detection system or deploy a specialized code-generation model for a niche smart contract language.

Practical Implementation

Deploying a model from Hugging Face for a project is straightforward. After selecting a model from the Hub, you can navigate to the "Deploy" option and choose "Inference Endpoints." You will configure your cloud provider, instance type (GPU/CPU), and scaling settings. Hugging Face then provides you with a secure API endpoint to integrate into your application. This endpoint can be used by an AI app generator like Dreamspace to power features just like you would with a proprietary API. For a deeper look, you can explore this guide for developers on building generative AI-powered apps.
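Calling the resulting endpoint is a plain authenticated POST. This sketch assumes a text-generation model, whose endpoints conventionally accept an `inputs` string and return a list containing `generated_text`; other tasks use different payload and response shapes. The endpoint URL and token are placeholders for the values shown on your endpoint's page.

```python
import json
import urllib.request


def build_endpoint_request(endpoint_url, hf_token, prompt, max_new_tokens=128):
    """Build (but do not send) a text-generation request for an Inference Endpoint."""
    payload = {
        "inputs": prompt,
        "parameters": {"max_new_tokens": max_new_tokens},
    }
    return urllib.request.Request(
        endpoint_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {hf_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def extract_generated_text(response_json):
    """Read the first generation from a text-generation response body."""
    return response_json[0]["generated_text"]
```

Because the request is ordinary HTTP, any tool that can call a proprietary API with a bearer token can call this endpoint the same way.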

This approach gives you more control over the underlying hardware and costs, which are directly tied to instance uptime and the number of replicas you run.
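That relationship is simple enough to estimate up front. The helper below is a back-of-the-envelope sketch: the hourly rate is a made-up figure, and it assumes billing is linear in replica-hours with no minimum charge.

```python
def endpoint_cost(hourly_rate, usage):
    """Sum the cost over (replicas, hours) segments of uptime.

    hourly_rate is the price of one replica for one hour (hypothetical here).
    """
    return sum(hourly_rate * replicas * hours for replicas, hours in usage)


# Example: one replica for 100 quiet hours, two replicas for a 10-hour spike.
estimate = endpoint_cost(0.50, [(1, 100), (2, 10)])
print(f"Estimated cost: ${estimate:.2f}")
```

Unlike token-based pricing, an idle endpoint still accrues cost, so scaling down during quiet periods matters as much as picking the right instance type.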

Website: https://huggingface.co

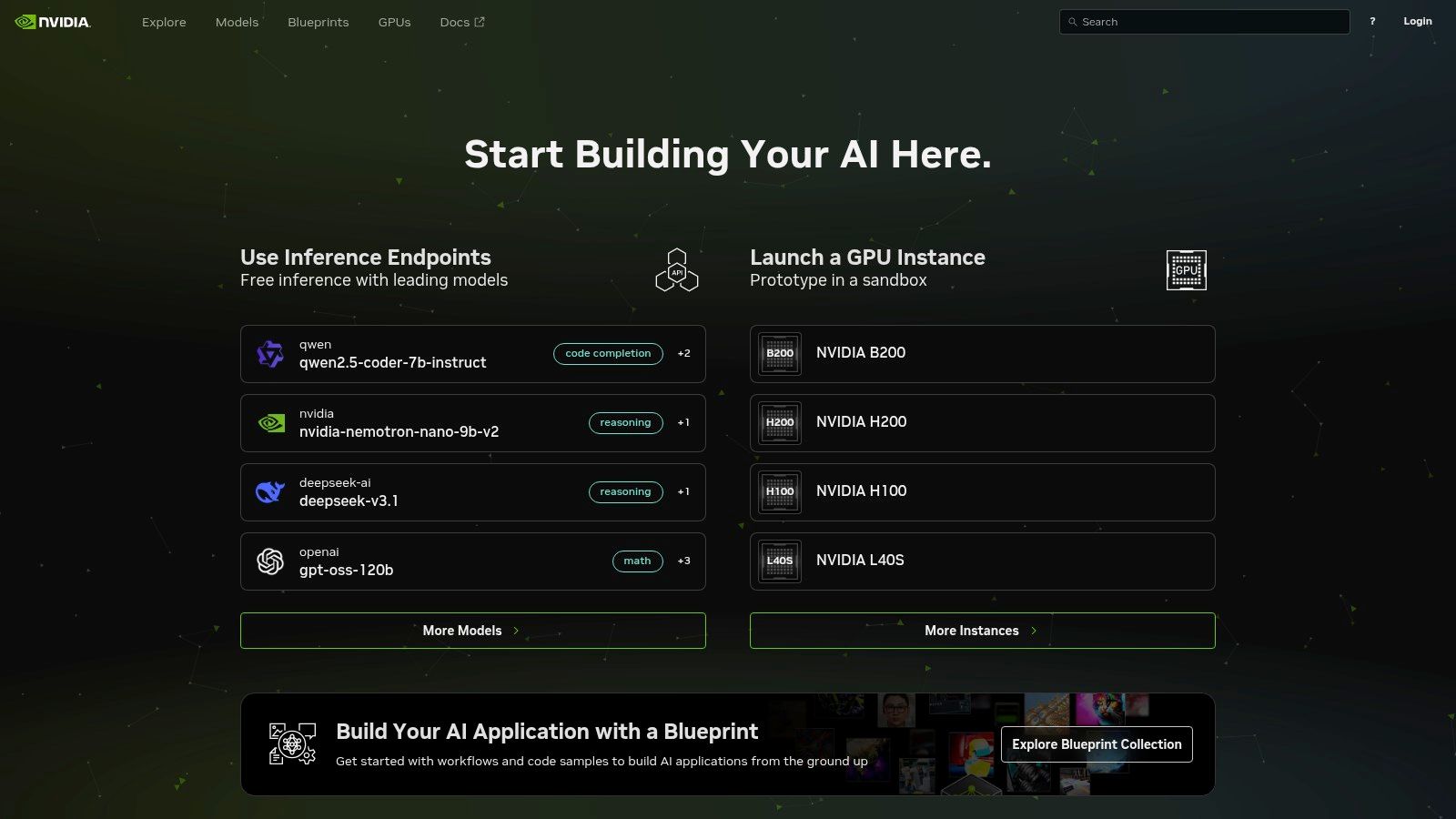

7. NVIDIA NIM (Inference Microservices) and NGC

NVIDIA’s Inference Microservices (NIM) and NGC catalog provide a streamlined path for developers seeking hardware-accelerated inference of the best AI models. Whether you need to self-host optimized containers on NVIDIA GPUs or leverage hosted endpoints, NIM ensures high throughput, low latency, and compatibility with OpenAI-style APIs for seamless integration.

With support for leading open models—Llama, Gemma, Qwen—and performance-tuned runtimes like TensorRT-LLM and vLLM, NVIDIA NIM stands out among platforms offering the best AI models. The NGC catalog hosts containers and pre-trained models you can download, paying only for compute. A dedicated Developer Program grants free prototyping access and downloadable NIMs to test at scale before committing to production.

Key Features and Use Cases

- Performance-Tuned Containers: TensorRT-LLM, vLLM and optimized runtimes deliver up to 4x faster inference on NVIDIA GPUs.

- OpenAI-Spec APIs: Integrate with minimal code changes using familiar REST or gRPC endpoints.

- Developer Program: Free tier access for prototyping, with downloadable microservices to test locally.

- NGC Catalog: Browse and pull containers or models, then self-host via Docker or switch to enterprise-grade endpoints.

Expert Insight: Dreamspace, as a vibe coding studio and AI app generator, can leverage NVIDIA NIM to deploy blockchain-based smart contract auditors with sub-50ms latency, ensuring snappy user experiences in decentralized apps.

Practical Implementation

To get started, register on the NVIDIA Developer Program and pull your first NIM container from NGC. Use Docker Compose or Kubernetes to deploy on-premise, then point your application at the OpenAI-spec endpoint. For no-code platforms, Dreamspace can ingest these endpoints to build AI features like real-time token analysis or conversational agents.
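Because the endpoint follows the OpenAI spec, switching between a self-hosted container and a hosted endpoint can come down to changing a base URL. The sketch below assumes a NIM container listening on `http://localhost:8000/v1` and uses `meta/llama3-8b-instruct` as a placeholder model name; both depend on the container you actually pull and how you run it.

```python
import json
import urllib.request


def build_nim_request(prompt, base_url="http://localhost:8000/v1",
                      model="meta/llama3-8b-instruct", api_key=None):
    """Build (but do not send) an OpenAI-style chat request for a NIM endpoint.

    api_key is optional: a local container may not need one, while a hosted
    endpoint usually does.
    """
    headers = {"Content-Type": "application/json"}
    if api_key:
        headers["Authorization"] = f"Bearer {api_key}"
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )
```

Keeping the base URL and key configurable means the same application code can move from a local prototype to a hosted endpoint without changes to the request logic.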

When migrating from prototyping to production, consider upgrading to an NVIDIA AI Enterprise license for enterprise support and optimized drivers. Monitor GPU utilization on your deployment so you know when to scale horizontally or switch to hosted endpoints as demand spikes.

Website: https://build.nvidia.com

Build Your Future: Integrating AI with No-Code Blockchain Apps

Choosing from the vast landscape of the best AI models is a pivotal first step, but it’s the integration that truly unlocks transformative potential. As we've explored, platforms like OpenAI, Anthropic, Google Vertex AI, and Amazon Bedrock offer distinct advantages, from cutting-edge reasoning to managed infrastructure. The right choice isn't about finding a single "best" model, but rather the optimal model for your specific project's requirements, budget, and technical architecture.

The true paradigm shift, however, lies in bridging the gap between these powerful AI systems and emerging technologies like blockchain. For developers and creators in the Web3 space, this fusion opens up a new frontier for decentralized applications (dApps). The challenge has always been the complexity of smart contract development and on-chain data interaction, creating a high barrier to entry.

This is where the power of no-code AI app generators becomes undeniable. Platforms like Dreamspace, a premier vibe coding studio, act as a crucial translator. They take the sophisticated output from the best AI models we've discussed and convert it directly into production-ready on-chain applications, complete with smart contracts and SQL blockchain data queries. This synergy dramatically accelerates development, allowing you to focus on innovation rather than intricate coding.

Key Takeaways and Actionable Next Steps

To move forward, you need a clear strategy. Your journey from selecting a model to launching a functional application requires careful consideration of several factors.

- Define Your Core Use Case: First, pinpoint exactly what you need AI to do. Is it for advanced text generation (like Claude 3 Opus), complex data analysis (like Gemini on Vertex AI), or deploying a variety of open-source models (like Hugging Face or Amazon Bedrock)? A clear objective will immediately narrow your options.

- Assess Integration Complexity: Consider your existing tech stack. If you're already embedded in a specific cloud ecosystem, Azure AI Foundry or Google Vertex AI might offer the smoothest integration. For maximum flexibility and access to the latest open-source innovations, NVIDIA NIM or Hugging Face could be ideal.

- Prototype with No-Code: Before committing to a complex development cycle, leverage an AI app generator. Use a tool like Dreamspace to rapidly prototype your idea. This allows you to test the interaction between your chosen AI model's logic and a blockchain environment without writing a single line of code, saving invaluable time and resources.

By following this strategic approach, you can effectively navigate the ecosystem of the best AI models and translate their power into tangible, innovative applications. The future is not just about using AI; it's about seamlessly weaving it into the fabric of decentralized systems to build the next generation of technology.

Ready to bring your AI-powered blockchain ideas to life without the coding headache? Explore Dreamspace, the AI app generator that translates your vision into fully functional on-chain applications. Visit Dreamspace to start building today and see how easy it is to connect the world's best AI models to the decentralized web.