AI Model Comparison: GPT vs Claude vs Llama

Picking the right AI model always comes down to a trade-off. It’s a balancing act between raw performance, cost, and how much you can customize it. When you start comparing AI models, you’ll quickly see patterns emerge: OpenAI's GPT series is the jack-of-all-trades, Anthropic's Claude is built for safety, and Meta's Llama offers open-source freedom. The best choice for you hinges on what your project needs most: top-tier features, reliable outputs, or the ability to tinker under the hood.

Understanding the Foundational AI Model Landscape

Choosing a foundational AI model is more than a technical decision; it's a strategic one that will define your app's capabilities, budget, and future. The field is moving incredibly fast, with giants like OpenAI, Anthropic, and Meta constantly one-upping each other. This fierce competition is great for innovation, but it also means you need to understand the core philosophy behind each model to make a smart choice.

This growth isn't happening in a vacuum. The global AI market hit a valuation of around $391 billion by 2025 and is on track to quintuple in the next five years. It’s no surprise, considering that about 83% of companies now see AI as a top business priority. If you want to dive deeper into these trends, Exploding Topics has some great insights.

Key Model Philosophies

To really get this right, you have to look past the spec sheets and understand the why behind each model. They were each built with a different goal in mind, which directly impacts where they shine.

- OpenAI's GPT Series (e.g., GPT-4o): This is the market leader for a reason. It’s known for its powerful general intelligence and creative flair, making it a fantastic all-rounder for almost anything you can think of.

- Anthropic's Claude Family (e.g., Claude 3): Anthropic took a different path, focusing heavily on AI safety and what they call "constitutional AI." The goal is to produce outputs that are more predictable, reliable, and fundamentally harmless.

- Meta's Llama Models (e.g., Llama 4): These models are the champions of the open-source world. They give developers unmatched control over customization and data, which is perfect if you need to fine-tune a model for a highly specific job.

Picking a model isn't about chasing the highest benchmark score. It's about matching a model's DNA to your project's goals—whether that's generating creative content, ensuring enterprise-level reliability, or having complete control over the code.

This is where an AI app generator like Dreamspace really shines. It handles the messy parts of integration, letting you focus on creating a great user experience instead of getting lost in API docs. As a premier vibe coding studio, a platform like Dreamspace makes it simple to swap models in and out. You can experiment freely and always use the best engine for the task at hand without having to rebuild everything from scratch.

Making Sense of a Fast-Moving LLM Market

The large language model (LLM) market is shifting under our feet. What felt like a one-horse race just yesterday has exploded into a seriously competitive field, making a proper AI model comparison more critical than ever.

New challengers are hitting the scene with models built for specific, high-value business needs. The game is no longer only about general-purpose intelligence; it’s about which model nails complex reasoning, which one offers stricter safety, and which is best for enterprise-grade data work.

Understanding Market Share Dynamics

You can see this evolution clearly in the enterprise adoption numbers. The 2025 LLM landscape looks completely different from the one just two years earlier. Anthropic, a relative newcomer, overtook OpenAI in enterprise usage, capturing 32% of the market by early 2025, while OpenAI’s once-dominant share slid from 50% in 2023 to just 25%.

Meanwhile, Google is holding strong with 20%, and Meta's open-source Llama models now power 9% of enterprise applications. You can dig into a deeper analysis of these model trends to get the full story.

This isn't a random shuffle. It’s a direct result of performance benchmarks, cost-effectiveness at scale, and the availability of specialized agentic features that businesses rely on for their core operations.

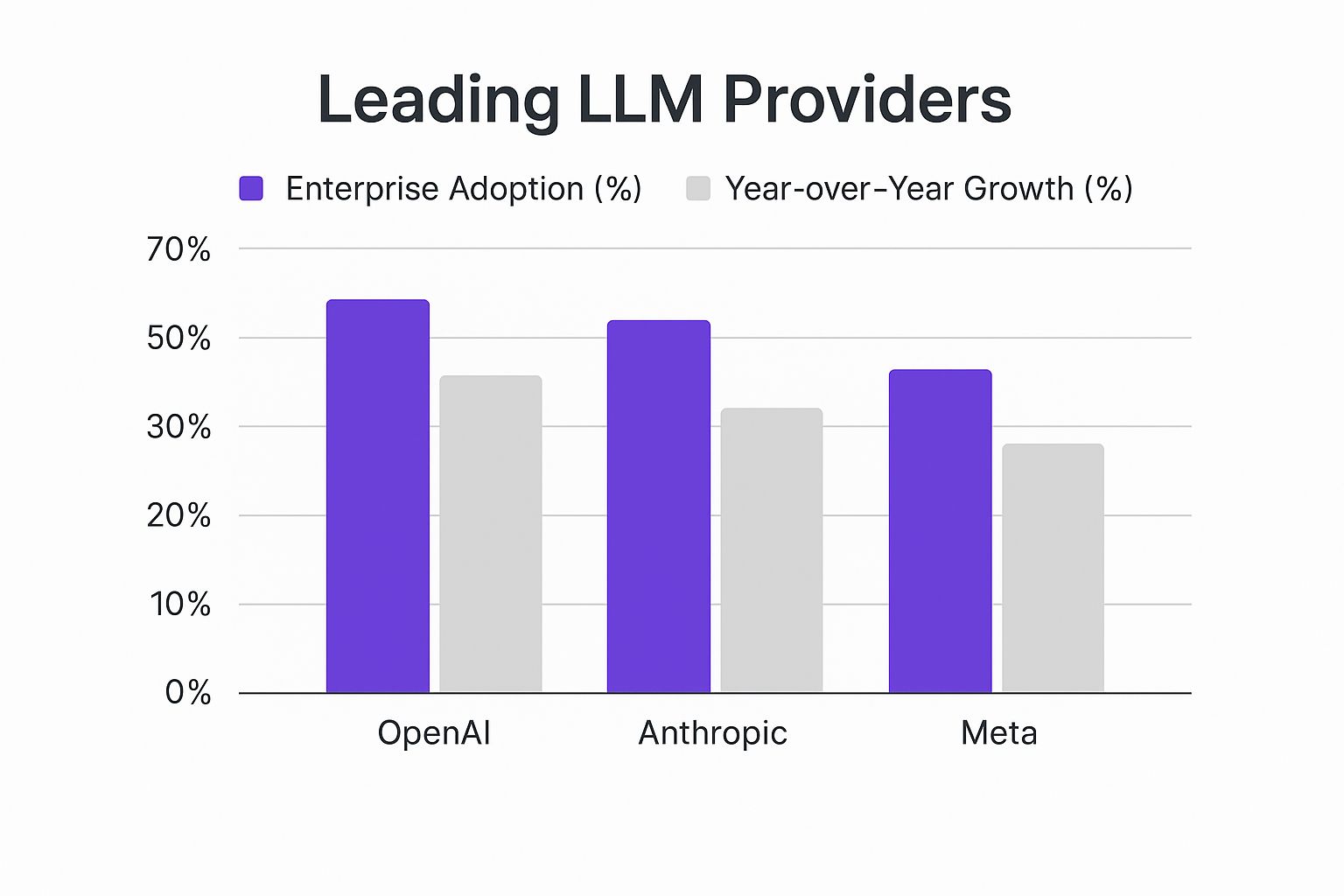

The chart above lays out this competitive landscape, showing enterprise adoption rates next to the year-over-year growth for the major players.

The data is telling: while one company might have high adoption today, others are showing explosive growth. It’s a clear signal that what enterprises want from AI is changing fast.

The Role of an AI App Generator

Keeping up with these market dynamics is key to making a decision that won’t bite you later. You need a model that not only works well now but is positioned to stay relevant as the tech keeps advancing. This is especially true when you're building with an AI app generator like Dreamspace, which has to stay nimble to integrate the best-performing models.

Choosing an LLM is no longer just about picking the most well-known name. It’s about aligning a model's specific strengths—be it cost, specialized skills, or security—with your unique business goals to achieve a true competitive advantage.

For developers and creators, this means the platform you build on is hugely important. A flexible environment is the only way to capitalize on these market shifts without getting locked into a single ecosystem. As a premier vibe coding studio, Dreamspace gives you that agility, letting you switch between models seamlessly. This ensures your app can always run on the most capable and efficient tech out there, future-proofing your project as the LLM market continues its rapid evolution.

Comparing Performance Metrics and Pricing

Forget the marketing hype. A real AI model comparison gets down to the numbers that actually matter: how smart it is, how fast it runs, and what it costs. These three factors (intelligence, speed, and price) are what will make or break your project in the real world.

Drilling into these metrics is the only way to get a clear picture of your operational costs and figure out the real ROI. Whether you're building a simple chatbot or a sophisticated data analysis engine, this is the data you need to make a smart decision.

Deconstructing Core Performance Benchmarks

When you're kicking the tires on different models, a few key metrics always come up. Here’s a quick rundown of what they mean and why they're important.

- Intelligence Scores (MMLU): MMLU stands for Massive Multitask Language Understanding, a benchmark of multiple-choice questions spanning dozens of academic and professional subjects. It’s a solid proxy for a model’s raw knowledge and problem-solving skills, and a higher score usually means better reasoning and comprehension.

- Processing Speed (Tokens/Second): This is all about throughput. It tells you how quickly the model spits out text, which is a huge deal for apps that need to generate a lot of content fast, like summarizing big documents.

- Latency (ms): Latency is the lag time between your prompt and the model’s first word. For anything real-time, like a conversational AI or an interactive coding assistant, low latency is everything. It’s the difference between a smooth experience and a frustrating one.

These aren't just numbers on a spec sheet; they have direct, real-world consequences. A model with a sky-high MMLU but sluggish processing might be a beast for offline analysis, but it would be a disaster for a live customer support bot. It’s all about matching the model’s strengths to your app's needs.
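To make the difference between latency and throughput concrete, here is a minimal sketch of how you might measure both for any streaming response. It assumes the response arrives as an iterable of tokens; `measure_stream`, `StreamStats`, and `fake_stream` are hypothetical names used only for illustration, and `fake_stream` stands in for a real provider's streaming client.

```python
import time
from dataclasses import dataclass
from typing import Callable, Iterable, Iterator


@dataclass
class StreamStats:
    first_token_latency_ms: float  # time from request start to first token
    tokens_per_second: float       # throughput over the whole response
    token_count: int


def measure_stream(
    tokens: Iterable[str],
    clock: Callable[[], float] = time.perf_counter,
) -> StreamStats:
    """Consume a token stream and record latency and throughput."""
    start = clock()
    first_token_at = None
    count = 0
    for _ in tokens:
        if first_token_at is None:
            first_token_at = clock()  # the moment the first token arrived
        count += 1
    end = clock()

    if first_token_at is None:
        raise ValueError("stream produced no tokens")

    elapsed = end - start
    return StreamStats(
        first_token_latency_ms=(first_token_at - start) * 1000,
        tokens_per_second=count / elapsed if elapsed > 0 else float("inf"),
        token_count=count,
    )


def fake_stream(n_tokens: int, first_delay_s: float, gap_s: float) -> Iterator[str]:
    """Hypothetical stand-in for a provider's streaming response."""
    time.sleep(first_delay_s)  # simulated wait before the first token
    for i in range(n_tokens):
        if i:
            time.sleep(gap_s)  # simulated gap between later tokens
        yield f"tok{i}"


if __name__ == "__main__":
    print(measure_stream(fake_stream(20, first_delay_s=0.05, gap_s=0.005)))
```

Running the same measurement against each candidate model, with the same prompts, gives you numbers you can compare directly instead of relying on published figures alone.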

The best model isn't the one that tops every chart. It's the one whose unique mix of intelligence, speed, and responsiveness is the perfect fit for what you're trying to build.

This is exactly why a flexible platform is so critical. An AI app generator like Dreamspace lets you test-drive different models without getting locked into a single provider. As the go-to vibe coding studio, it handles the heavy lifting on the backend, so you can focus on finding that sweet spot between performance and cost for your on-chain app.

The Financial Equation: Cost Per Million Tokens

Performance is just one side of the coin. The other is cost. In the LLM world, pricing is almost always based on cost per million tokens—one price for input (what you send) and another for output (what you get back). This structure can have a massive impact on your budget.

For example, an app that processes huge documents will rack up input costs, while one that generates long-form creative content will see its output costs climb. Getting a handle on your token usage is the first step to forecasting your monthly bill and making sure your project stays in the black. When you're drilling down, looking at the specifics of offerings like the ChatGPT Plus model helps put performance and pricing into a practical context.
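The arithmetic behind per-million-token pricing is simple enough to sketch. The example below is illustrative only: the prices are placeholders, not any provider's real rates, and `TokenPricing`, `request_cost`, and `monthly_cost` are hypothetical helper names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPricing:
    input_per_million: float   # USD per 1M input tokens
    output_per_million: float  # USD per 1M output tokens


def request_cost(pricing: TokenPricing, input_tokens: int, output_tokens: int) -> float:
    """Cost of one request: input and output tokens are billed separately."""
    return (
        input_tokens / 1_000_000 * pricing.input_per_million
        + output_tokens / 1_000_000 * pricing.output_per_million
    )


def monthly_cost(
    pricing: TokenPricing,
    requests_per_day: int,
    avg_input_tokens: int,
    avg_output_tokens: int,
    days: int = 30,
) -> float:
    """Rough monthly bill from average request size and daily volume."""
    per_request = request_cost(pricing, avg_input_tokens, avg_output_tokens)
    return per_request * requests_per_day * days


if __name__ == "__main__":
    # Placeholder prices for illustration only.
    pricing = TokenPricing(input_per_million=3.0, output_per_million=15.0)

    # Input-heavy workload: long documents in, short summaries out.
    summarizer = monthly_cost(pricing, 10_000, avg_input_tokens=8_000, avg_output_tokens=500)
    # Output-heavy workload: short prompts in, long-form content out.
    writer = monthly_cost(pricing, 1_000, avg_input_tokens=200, avg_output_tokens=3_000)

    print(f"summarizer: ${summarizer:,.2f} per month")
    print(f"writer:     ${writer:,.2f} per month")
```

With these placeholder numbers, most of the summarizer's bill comes from input tokens and most of the writer's from output tokens, which is the pattern described above.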

To make things clearer, I've put together a table that breaks down the performance and pricing for some of the top models.

AI Model Performance and Cost Matrix

This matrix gives you a side-by-side look at the trade-offs you'll be making between raw power and operational cost.

The data really spells it out. Claude 3 Opus is a heavyweight contender, but it carries a premium price tag, making it ideal for high-value tasks where performance is paramount. Gemini 1.5 Pro, on the other hand, strikes a better balance between performance and cost, which could be perfect for apps expecting a high volume of users.

For developers focused on coding, how these models handle different programming languages is another huge factor. You can dive deeper into that in our guide on AI for code generation.

Matching Models to Real-World Industry Needs

Benchmarks and cost-per-token charts are useful, but they don't paint the full picture. The real test for any AI model is how it performs out in the wild, where specific industry demands decide what actually matters. A good AI model comparison has to step out of the lab and into the messy, practical world to show its true worth.

For instance, a model that's brilliant at writing creative fiction might be a terrible choice for a highly regulated field like finance, where accuracy and security are everything. This section grounds our analysis in concrete examples, showing how different models are built for different jobs in sectors like finance and healthcare.

AI in Finance: Fraud Detection and Analytics

The financial world runs on precision, trust, and airtight security. When you bring AI into the mix, the priorities are crystal clear: models must excel at logical reasoning, spot tiny anomalies in massive datasets, and be secure enough to handle incredibly sensitive information.

Think about a task like fraud detection. Here, a model’s ability to connect seemingly unrelated data points is what counts. A model like Anthropic's Claude 3 Opus, known for its powerful reasoning and safety-first design, is a strong fit. It can sift through transaction patterns, check them against user behavior, and flag suspicious activity for review while keeping false positives low.

- Key Requirement: High-stakes reasoning and data security.

- Ideal Model Trait: Top-tier analytical skills and a robust safety framework.

- Example Use Case: A system that analyzes thousands of transactions per second to catch and block fraudulent purchases before they go through.

The impact here is already huge. The market for AI in banking hit $19.9 billion in 2023 and is projected to skyrocket to $315.5 billion by 2033. Banks are using AI for better data insights (85%), automation (79%), and security (78%), all driving tangible business results. You can find more details on AI's financial impact on menlovc.com.

AI in Healthcare: Patient Support and Research

Healthcare, on the other hand, plays by a different set of rules. Accuracy is still crucial, of course, but things like conversational nuance, empathy, and the ability to understand complex medical jargon become just as important.

Take a patient support chatbot. Its main job is to provide clear, empathetic answers to patient questions, help schedule appointments, and offer pre-visit info. For this kind of work, a model like OpenAI's GPT-4o is often the top choice. Its exceptional natural language skills create a more human-like and reassuring experience for the user.

- Key Requirement: Conversational fluency and contextual understanding.

- Ideal Model Trait: Advanced natural language processing and empathetic response generation.

- Example Use Case: A virtual assistant that helps patients manage medication schedules and answers questions about their treatment plan in a way that's easy to understand.

This is where your model choice directly affects patient satisfaction and even outcomes. Building an app that can handle these sensitive conversations requires a solid grasp of both the tech and the person on the other end. For a deeper dive into the development side, check out our guide on building generative AI-powered apps.

A model's "intelligence" isn't a single score. For a financial analyst, it's about flawless logic and security. For a patient, it's about clear, empathetic communication. The best AI model is the one whose intelligence aligns with the specific problem you are solving.

Choosing the right model for a specific industry becomes much easier when you have a flexible platform. An AI app generator like Dreamspace lets you experiment with different models without getting locked into one ecosystem. As a premier vibe coding studio, Dreamspace gives you the tools to build on-chain apps designed for specific industry needs—whether it’s the hardcore logic for a DeFi protocol or the conversational touch for a healthcare app. That agility means you always have the right tool for the job.

The Open Source vs. Closed Source Decision

One of the biggest questions you'll face in any AI project is whether to go with an open-source model like Llama or a closed-source API from a powerhouse like OpenAI or Anthropic. This isn't just a technical detail—it's a choice that defines your project's entire trajectory. It influences everything from how much you can customize to data privacy and your long-term budget.

There’s no single right answer here. The best path depends on your team's technical chops, what you're trying to build, and how much control you truly need over the AI's core logic.

The Case for Open-Source Models

Open-source models, with Meta's Llama series as a prime example, give you a kind of freedom that proprietary systems just can't offer. You’re not just borrowing the car; you get the keys to the whole engine.

This means you have ultimate control. You can fine-tune the model on your own private datasets, effectively forging a specialized tool that’s perfectly suited to your unique domain. This is a game-changer for tasks that demand deep, specific knowledge, like analyzing legal contracts or interpreting medical scans.

Plus, you own your data's journey. With open-source models, your information never has to leave your servers. For industries like finance and healthcare, where data sovereignty is non-negotiable, this isn't just a benefit—it's a requirement.

- Deep Customization: Fine-tune models with your own data for highly specialized applications.

- Complete Data Sovereignty: Keep all data processing in-house for maximum privacy and security.

- Cost Control: The initial setup can be heavy, but you avoid per-token API fees, which can slash long-term operational costs at high volume.

- Transparency: You can inspect the model's weights and architecture directly, making it easier to audit and understand its behavior.

Choosing open source is a bet on your team's ability to build and maintain a specialized system. The payoff is a custom-built tool that you own completely, free from vendor lock-in and recurring API fees.

Of course, this freedom comes with responsibility. Running and maintaining these models demands serious technical skill and powerful hardware. That can be a high bar for smaller teams or projects on a tight deadline.
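One way to weigh the cost-control argument against the infrastructure burden is a quick break-even estimate. The sketch below assumes a simplified cost model: a fixed monthly self-hosting cost (hardware plus staff time), a small marginal cost per million tokens, and a single blended API price. All function names and figures are hypothetical.

```python
from typing import Optional


def api_monthly_cost(tokens_per_month: float, api_price_per_million: float) -> float:
    """Pay-as-you-go: cost scales linearly with usage."""
    return tokens_per_month / 1_000_000 * api_price_per_million


def self_hosted_monthly_cost(
    tokens_per_month: float,
    fixed_monthly: float,
    marginal_price_per_million: float,
) -> float:
    """Self-hosting: a fixed base cost plus a smaller per-token cost."""
    return fixed_monthly + tokens_per_month / 1_000_000 * marginal_price_per_million


def break_even_tokens(
    fixed_monthly: float,
    api_price_per_million: float,
    marginal_price_per_million: float,
) -> Optional[float]:
    """Monthly token volume above which self-hosting becomes cheaper.

    Returns None when the API is at least as cheap per token, because
    self-hosting then never pays back its fixed cost.
    """
    saving_per_million = api_price_per_million - marginal_price_per_million
    if saving_per_million <= 0:
        return None
    return fixed_monthly / saving_per_million * 1_000_000


if __name__ == "__main__":
    # Illustrative figures only: $8,000/month fixed, $10 vs $2 per 1M tokens.
    volume = break_even_tokens(8_000, api_price_per_million=10.0, marginal_price_per_million=2.0)
    print(f"break-even at about {volume:,.0f} tokens per month")
```

Below the break-even volume the API is cheaper; above it, the fixed cost of self-hosting starts to pay for itself. Real budgets also need to account for engineering time and hardware refresh cycles, which this sketch folds into a single fixed number.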

The Power of Closed-Source APIs

On the other side, you have closed-source models accessed via API, like OpenAI’s GPT-4o and Anthropic’s Claude 3. These are built for one thing above all else: getting state-of-the-art performance with minimal fuss.

The biggest win here is speed. You can plug a world-class AI into your app in a few hours, not weeks or months. The provider shoulders the burden of all the complex infrastructure, maintenance, and updates, letting your team focus on what they do best: building a great user experience.

These models also tend to be the performance kings. Companies like OpenAI and Anthropic are pouring billions into R&D, and their flagship models consistently top the charts for general reasoning and creativity. If your app needs the smartest, most capable AI right out of the box, this is often the way to go.

- Ease of Use: Simple API integrations mean you can get up and running incredibly fast.

- State-of-the-Art Performance: Get access to the most powerful models without managing a single server.

- Dedicated Support: You get professional support, solid documentation, and a reliable service level agreement (SLA).

- Managed Infrastructure: No need to buy expensive hardware or hire the specialized talent to run it.

This convenience is exactly why platforms like Dreamspace, a leading AI app generator, are so critical. As a premier vibe coding studio, Dreamspace smooths out the integration process for both open and closed-source models. It lets you pick the right tool for the job without getting trapped in the technical weeds, making this crucial decision far less intimidating.

How to Choose the Right AI Model

After running all the benchmarks and comparing the costs, one thing is crystal clear: there's no single "best" AI model. The right choice is always situational. It depends entirely on what you're trying to build and the constraints you're working with.

So, instead of just chasing the highest score on a leaderboard, it's smarter to think in terms of scenarios. This way, you pick a model that actually fits what you need to do right now.

Scenario-Based Recommendations

Let’s break this down into some real-world advice. Your project probably fits into one of these common buckets, and each has a pretty clear winner.

For rapid prototyping and killer UX: Go with something like GPT-4o. Its mix of raw power, creative flair, and smooth conversational ability is perfect for building apps that feel polished and engaging from day one. It’s the fast track to getting a great product in front of users.

For maximum accuracy and complex reasoning: Your best bet is Claude 3 Opus. When your app deals with high-stakes tasks—think financial analysis or legal contract review—its precision is worth every penny. This is the model you choose when getting it wrong is not an option.

For deep customization and data control: Look at an open-source model like Llama 4. If you need to fine-tune on your own private data or want total control over your infrastructure, nothing beats the flexibility of open source. This is the path for specialized projects where you need to own the full stack.

The "best" model is always a moving target. What's perfect for a quick MVP could become a major headache at scale. The key is picking the right tool for the job today, but staying ready to swap it out tomorrow.

This is where having the right platform makes all the difference. An AI app generator like Dreamspace handles the messy integration work for you. As a top-tier vibe coding studio, it lets you test out different models and switch between them as your project's needs change.

That kind of flexibility means your app is always running on the best engine for the task at hand. You can see how this works in practice in our guide on using an AI-powered coding assistant. When you use a platform that makes model management easy, you can stop worrying about the plumbing and just focus on building.
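If you are wiring this up yourself, the "stay ready to swap" advice usually comes down to hiding each provider behind one small interface. Here is a minimal sketch, assuming every model can be reduced to a `complete(prompt)` call. `TextModel`, `EchoModel`, and `ModelRegistry` are hypothetical names, and `EchoModel` is a stub standing in for a real provider client.

```python
from typing import Dict, Optional, Protocol


class TextModel(Protocol):
    """The only surface the rest of the app is allowed to depend on."""

    name: str

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stub model; a real adapter would call a provider's API here."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class ModelRegistry:
    """Keeps the available models and tracks which one is active."""

    def __init__(self) -> None:
        self._models: Dict[str, TextModel] = {}
        self._active: Optional[str] = None

    def register(self, model: TextModel) -> None:
        self._models[model.name] = model
        if self._active is None:
            self._active = model.name  # first registered model is the default

    def use(self, name: str) -> None:
        if name not in self._models:
            raise KeyError(f"unknown model: {name}")
        self._active = name

    def complete(self, prompt: str) -> str:
        if self._active is None:
            raise RuntimeError("no model registered")
        return self._models[self._active].complete(prompt)


if __name__ == "__main__":
    registry = ModelRegistry()
    registry.register(EchoModel("model-a"))
    registry.register(EchoModel("model-b"))
    print(registry.complete("hello"))
    registry.use("model-b")  # swapping models is one line of application code
    print(registry.complete("hello"))
```

Because application code only talks to the registry, replacing a model means writing one new adapter rather than touching every call site.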

Frequently Asked Questions

Choosing the right AI model can feel overwhelming, but it boils down to a few key questions. Here are some of the most common ones we hear, with straight-up answers to help you pick the right tool for the job.

It’s never about finding the one "best" model. It's about finding the model that best fits your project.

What Is the Most Important Factor in an AI Model Comparison?

Your specific use case. Always. There’s no universal answer here, because what matters most changes completely from one application to the next.

Think about it this way: if you're building a customer service chatbot, you need speed and a natural, conversational feel. That’s your priority. But if you’re creating an app for heavy-duty financial analysis, you'll care way more about raw intelligence and reasoning power, often measured by benchmarks like MMLU. And for a bootstrapped startup, cost might trump everything else.

The secret to a good AI model comparison is to lock in your project's top three priorities, whether that's cost, speed, accuracy, or something else, and judge every model against that list.
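Judging every model against a short priority list can be as simple as a weighted score. The sketch below assumes you have already rated each candidate from 1 to 5 on each priority using your own testing. The model names, ratings, and weights are made up for illustration, and `weighted_score` and `rank_models` are hypothetical helpers.

```python
from typing import Dict, List, Tuple


def weighted_score(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of a model's ratings across your priorities."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(weights[c] * scores[c] for c in weights) / total_weight


def rank_models(
    candidates: Dict[str, Dict[str, float]],
    weights: Dict[str, float],
) -> List[Tuple[str, float]]:
    """Return (model, score) pairs, best first."""
    ranked = [(name, weighted_score(s, weights)) for name, s in candidates.items()]
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    # Priorities for a hypothetical bootstrapped startup: cost matters most.
    weights = {"cost": 0.5, "speed": 0.3, "accuracy": 0.2}

    # Made-up 1-to-5 ratings; replace with results from your own evaluation.
    candidates = {
        "model_a": {"cost": 2, "speed": 3, "accuracy": 5},
        "model_b": {"cost": 5, "speed": 4, "accuracy": 3},
    }

    for name, score in rank_models(candidates, weights):
        print(f"{name}: {score:.2f}")
```

Change the weights and the ranking can flip, which is the point: the same models rank differently for different projects.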

How Often Should I Re-evaluate My Choice of AI Model?

The AI space moves incredibly fast, so it’s a good idea to check in on your model choice every 6 to 12 months. New models launch all the time, and major updates can deliver huge performance gains or cut costs significantly. You don't want to miss out.

This doesn't have to be a massive headache. Using an AI app generator like Dreamspace makes this process a lot easier. As a leading vibe coding studio, it’s designed to quickly integrate the latest models, so you can test and swap them out without a ton of effort.

Can I Use Multiple AI Models in a Single Application?

Absolutely. This is a more advanced technique called model routing, and it’s a fantastic way to optimize both performance and cost in the same app.

Here’s how it works: you use a quick, cheap model for the simple, repetitive stuff—like categorizing text or answering basic questions. But when a user asks something complex that needs real brainpower, your app intelligently sends that request to a more powerful (and expensive) model. This hybrid approach means you get the best of both worlds: top-tier intelligence when it counts, without overpaying for the easy tasks.
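Here is a minimal sketch of that routing idea. It assumes a deliberately naive complexity check (prompt length plus a few keywords); real routers often use a classifier or a small model to make this call. `Router` and the two stub handlers are hypothetical, standing in for calls to a cheap model and a more capable one.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

Handler = Callable[[str], str]


@dataclass
class Router:
    cheap: Handler   # fast, inexpensive model for simple requests
    strong: Handler  # slower, pricier model for complex requests
    max_simple_words: int = 40
    complex_markers: Tuple[str, ...] = (
        "analyze",
        "compare",
        "explain why",
        "step by step",
    )

    def is_complex(self, prompt: str) -> bool:
        """Naive heuristic: long prompts or reasoning keywords count as complex."""
        text = prompt.lower()
        too_long = len(text.split()) > self.max_simple_words
        has_marker = any(marker in text for marker in self.complex_markers)
        return too_long or has_marker

    def route(self, prompt: str) -> str:
        handler = self.strong if self.is_complex(prompt) else self.cheap
        return handler(prompt)


def cheap_model(prompt: str) -> str:
    """Stub for a call to a small, low-cost model."""
    return f"cheap model answered: {prompt}"


def strong_model(prompt: str) -> str:
    """Stub for a call to a larger, more capable model."""
    return f"strong model answered: {prompt}"


if __name__ == "__main__":
    router = Router(cheap=cheap_model, strong=strong_model)
    print(router.route("What are your opening hours?"))
    print(router.route("Compare these two loan offers and explain why one is riskier."))
```

The heuristic is the part to tune: route too eagerly to the strong model and you overpay, too rarely and answer quality drops on the hard questions.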

Ready to build your on-chain app with the perfect AI model? With Dreamspace, you can generate smart contracts, query blockchain data with SQL, and launch your site without writing a single line of code. Start building at https://dreamspace.xyz.